In our previous blog post we introduced the redesigned-from-scratch retrievers framework, which enables the creation of complex ranking pipelines. We also explored how the Reciprocal Rank Fusion (RRF) retriever enables hybrid search by merging results from different queries. While RRF is easy to implement, it has a notable limitation: it focuses purely on relative ranks, ignoring actual scores. This makes fine-tuning and optimization a challenge.

Meet the linear retriever!

In this post, we introduce the linear retriever, our latest addition for supporting hybrid search! Unlike rrf, the linear retriever calculates a weighted sum across all queries that matched a document. This approach preserves the relative importance of each document within a result set while allowing precise control over each query’s influence on the final score. As a result, it provides a more intuitive and flexible way to fine-tune hybrid search.

Defining a linear retriever where the final score will be computed as:

It is as simple as:

GET linear_retriever_blog/_search

{

"retriever": {

"linear": {

"retrievers": [

{

"retriever": {

"knn": {

...

}

},

"weight": 5

},

{

"retriever": {

"standard": {

...

}

},

"weight": 1.5

},

]

}

}

}Notice how simple and intuitive it is? (and really similar to rrf!) This configuration allows you to precisely control how much each query type contributes to the final ranking, unlike rrf, which relies solely on relative ranks.

One caveat remains: knn scores may be strictly bounded, depending on the similarity metric used. For example, with cosine similarity or the dot product of unit-normalized vectors, scores will always lie within the [0, 1] range. In contrast, bm25 scores are less predictable and have no clearly defined bounds.

Scaling the scores: kNN vs BM25

One challenge of hybrid search is that different retrievers produce scores on different scales. Consider for example the following scenario:

Query A scores:

| doc1 | doc2 | doc3 | doc4 | |

|---|---|---|---|---|

| knn | 0.347 | 0.35 | 0.348 | 0.346 |

| bm25 | 100 | 1.5 | 1 | 0.5 |

Query B scores:

| doc1 | doc2 | doc3 | doc4 | |

|---|---|---|---|---|

| knn | 0.347 | 0.35 | 0.348 | 0.346 |

| bm25 | 0.63 | 0.01 | 0.3 | 0.4 |

You can see the disparity above: kNN scores range between 0 and 1, while bm25 scores can vary wildly. This difference makes it tricky to set static optimal weights for combining the results.

Normalization to the rescue: the MinMax normalizer

To address this, we’ve introduced an optional minmax normalizer that scales scores, independently for each query, to the [0, 1] range using the following formula:

This preserves the relative importance of each document within a query’s result set, making it easier to combine scores from different retrievers. With normalization, the scores become:

Query A scores:

| doc1 | doc2 | doc3 | doc4 | |

|---|---|---|---|---|

| knn | 0.347 | 0.35 | 0.348 | 0.346 |

| bm25 | 1.00 | 0.01 | 0.005 | 0.000 |

Query B scores:

| doc1 | doc2 | doc3 | doc4 | |

|---|---|---|---|---|

| knn | 0.347 | 0.35 | 0.348 | 0.346 |

| bm25 | 1.00 | 0.000 | 0.465 | 0.645 |

All scores now lie in the [0, 1] range and optimizing the weighted sum is much more straightforward as we now capture the (relative to the query) importance of a result instead of its absolute score and maintain consistency across queries.

Linear retriever example

Let’s go through an example now to showcase what the above looks like and how the linear retriever addresses some of the shortcomings of rrf. RRF relies solely on relative ranks and doesn’t consider actual score differences. For example, given these scores:

| doc1 | doc2 | doc3 | doc4 | |

|---|---|---|---|---|

| knn | 0.347 | 0.35 | 0.348 | 0.346 |

| bm25 | 100 | 1.5 | 1 | 0.5 |

| rrf score | 0.03226 | 0.03252 | 0.03200 | 0.03125 |

rrf would rank the documents as:

However, doc1 has a significantly higher bm25 score than the others, which rrf fails to capture because it only looks at relative ranks. The linear retriever, combined with normalization, correctly accounts for both the scores and their differences, producing a more meaningful ranking:

| doc1 | doc2 | doc3 | doc4 | |

|---|---|---|---|---|

| knn | 0.347 | 0.35 | 0.348 | 0.346 |

| bm25 | 1 | 0.01 | 0.005 | 0 |

As we can see in the above, doc1’s great ranking and score for bm25 is properly accounted for and reflected on the final scores. In addition to that, all scores lie now in the [0, 1] range so that we can compare and combine them in a much more intuitive way (and even build offline optimization processes).

Putting it all together

To take full advantage of the linear retriever with normalization, the search request would look like this:

GET linear_retriever_blog/_search

{

"retriever": {

"linear": {

"retrievers": [

{

"retriever": {

"knn": {

...

}

},

"weight": 5

},

{

"retriever": {

"standard": {

...

}

},

"weight": 1.5,

"normalizer": "minmax"

},

]

}

}

}This approach combines the best of both worlds: it retains the flexibility and intuitive scoring of the linear retriever, while ensuring consistent score scaling with MinMax normalization.

As with all our retrievers, the linear retriever can be integrated into any level of a hierarchical retriever tree, with support for explainability, match highlighting, field collapsing, and more.

When to pick the linear retriever and why it makes a difference

The linear retriever:

- Preserves relative importance by leveraging actual scores, not just ranks.

- Allows fine-tuning with weighted contributions from different queries.

- Enhances consistency using normalization, making hybrid search more robust and predictable.

Conclusion

The linear retriever is already available on Elasticsearch Serverless, and the 8.18 and 9.0 releases! More examples and configuration parameters can also be found in our documentation. Try it out and see how it can improve your hybrid search experience — we look forward to your feedback. Happy searching!

Ready to try this out on your own? Start a free trial.

Want to get Elastic certified? Find out when the next Elasticsearch Engineer training is running!

Related content

March 31, 2026

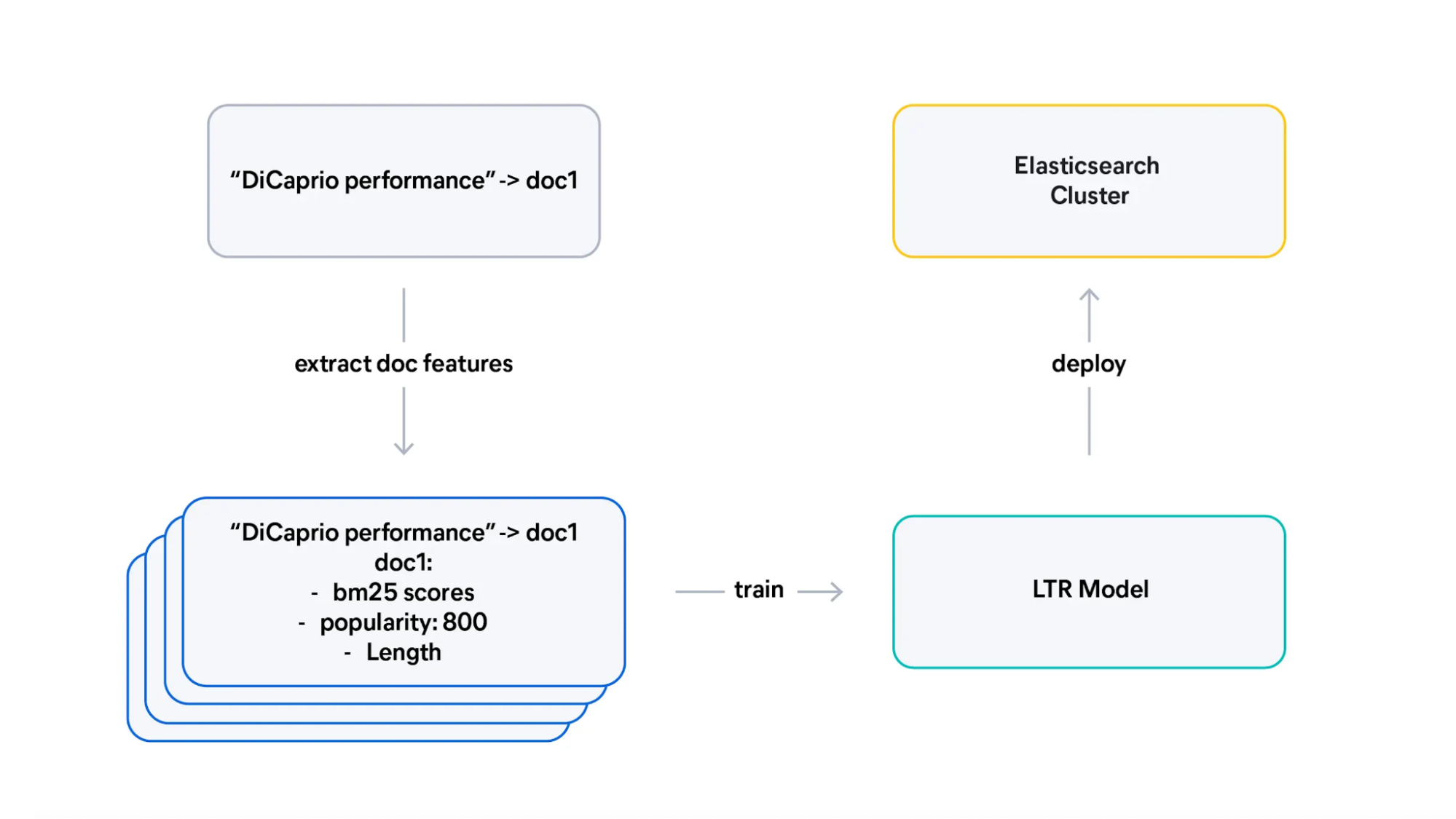

From judgment lists to trained Learning to Rank (LTR) models

Learn how to transform judgment lists into training data for Learning To Rank (LTR), design effective features, and interpret what your model learned.

March 5, 2026

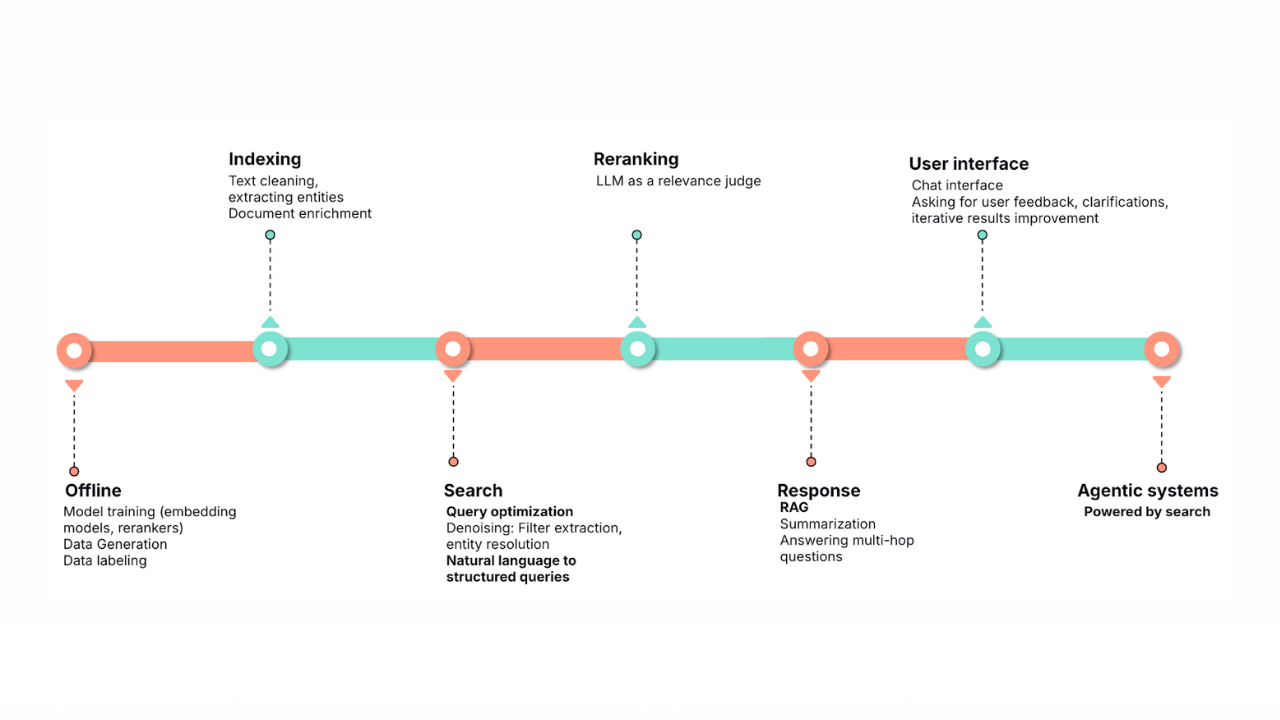

Does MCP make search obsolete? Not even close

Explore why search engines and indexed search remain the foundation for scalable, accurate, enterprise-grade AI, even in the age of MCP, federated search, and large context windows.

February 20, 2026

Ensuring semantic precision with minimum score

Improve semantic precision by employing minimum score thresholds. The article includes concrete examples for semantic and hybrid search.

February 17, 2026

An open‑source Hebrew analyzer for Elasticsearch lemmatization

An open-source Elasticsearch 9.x analyzer plugin that improves Hebrew search by lemmatizing tokens in the analysis chain for better recall across Hebrew morphology.

January 30, 2026

Query rewriting strategies for LLMs and search engines to improve results

Exploring query rewriting strategies and explaining how to use the LLM's output to boost the original query's results and maximize search relevance and recall.